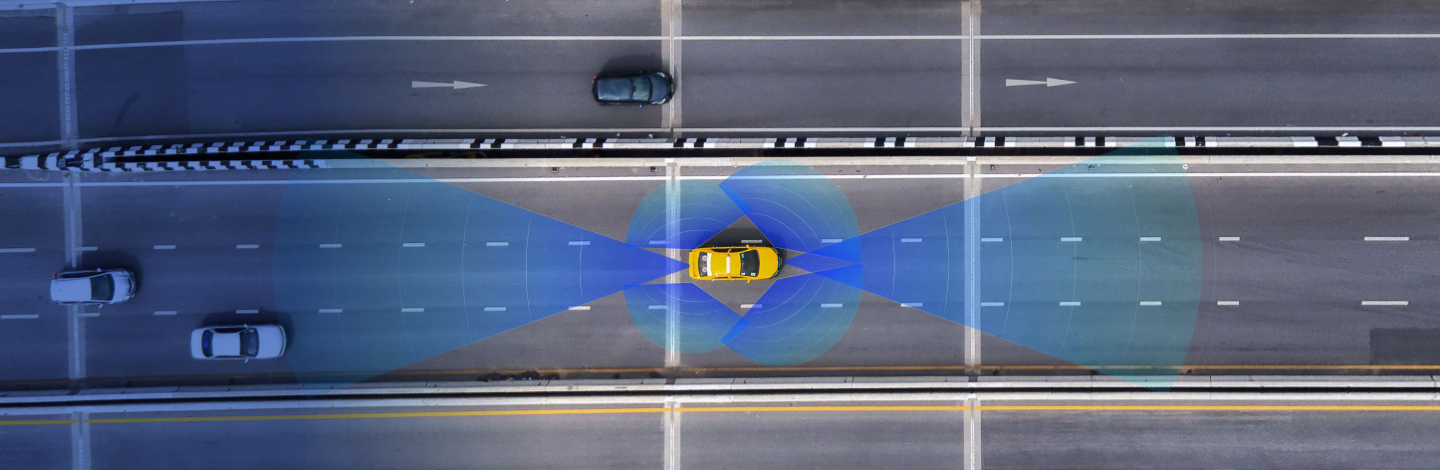

Perception is the ability to be aware, to regard, and to understand. To achieve truly safe autonomous driving, sensors need to go beyond the act of sensing and support true understanding. Perception is critical for safe autonomous driving, and imaging radar is critical for perception.

Arbe brings unparalleled safety to autonomous vehicles through a combination of front and rear perception radars, plus imaging radars at each corner, for a full 360° radar-based perception environment. The perception envelope provides an AI-based analysis of the vehicle's entire surroundings. Integrated perception software lets the radars at each position communicate with one another, producing a comprehensive and coherent understanding of the driving environment. Radar is a critical sensor in the perception suite because it is the only sensor that functions in all weather and lighting conditions, penetrating dust, rain, fog, and snow. By giving radar ultra-high resolution in all dimensions, Arbe repositions it from a supporting player to the backbone of the autonomous perception suite.

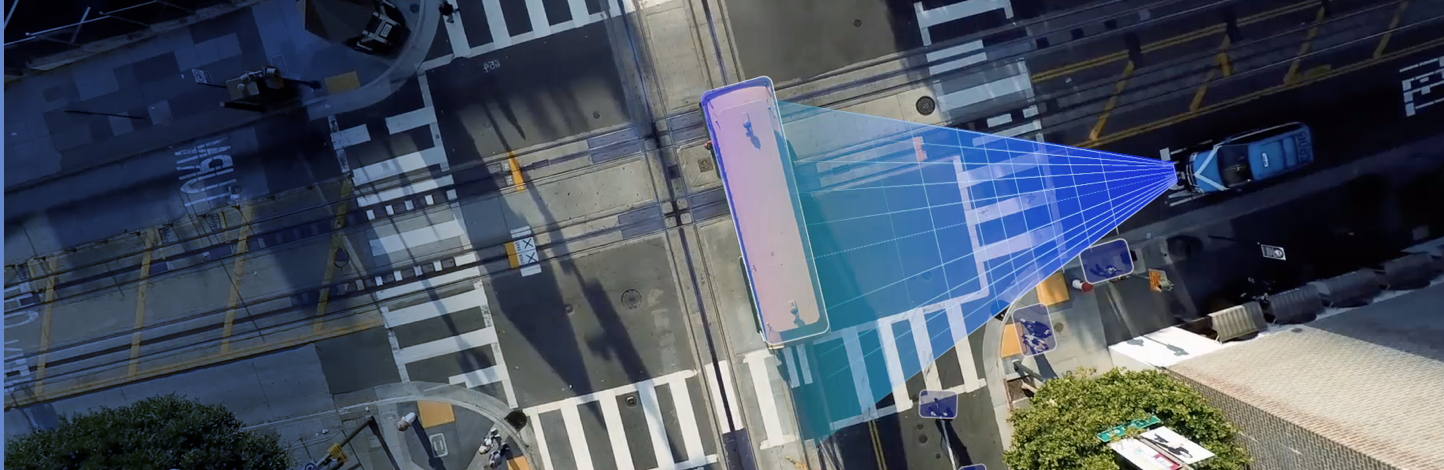

Arbe’s Phoenix and Lynx imaging radars leverage AI algorithms to provide perception data to the vehicle. Recently, Arbe integrated simulation models of these radars with the NVIDIA DRIVE Sim platform to both simplify and improve its development process. DRIVE Sim is an end-to-end simulation platform architected from the ground up to run large-scale, physically based, multi-sensor simulation. It supports autonomous vehicle development by improving productivity and accelerating time to market. By taking advantage of DRIVE Sim, Arbe can bring a better, more thoroughly tested, more easily integrated product to market, sooner.

AI Training, Solved

AI models underpin many of Arbe's capabilities, including simultaneous localization and mapping (SLAM), perception, super-resolution, and a host of additional next-generation radar developments. However, training these AI models requires mind-boggling amounts of data. The industry rule of thumb is that training data must be generated at 180 times the sensor's real acquisition rate, a pace that is achievable only with a simulation platform that has the depth, breadth, and quality of NVIDIA DRIVE Sim.

Access to the Unusual

DRIVE Sim also lets Arbe test uncommon or dangerous scenarios simply and safely. DRIVE Sim taps into NVIDIA's core technologies, including NVIDIA RTX, NVIDIA Omniverse, and AI, to deliver a powerful, cloud-based simulation platform. It can generate a wide range of scenarios for autonomous vehicle development and validation, including those that are rare or hazardous to encounter in the real world. Without it, deliberately testing the Perception Imaging Radar in real-world situations such as accidents, red-light running, driving at very high speeds, or a child bolting into the street would be not only difficult but unethical.

In a virtual environment, we can examine these problematic events from every angle: choosing which road participants are present, defining unexpected weather and lighting conditions, and even introducing randomization into the scene to find corner cases. DRIVE Sim helps us ensure that our Perception Imaging Radar solution has been evaluated to the highest standard. And because the simulator includes lidar and camera sensors in every scenario, Arbe has the information it needs to train on and format its radar data in a way that makes sensor fusion as seamless as possible.

Risk-Free Evaluation and Development

Through DRIVE Sim, NVIDIA supports our customers directly. Arbe's sensor models are built into the DRIVE Sim platform and can be made available to OEMs and tier-1 suppliers for training and validating their perception algorithms. The synthetic radar sensor is designed to match Arbe's Phoenix and Lynx systems as realistically and closely as possible. Furthermore, just as DRIVE Sim saves Arbe training time and effort, it does the same for our clients: their perception developers can create algorithms and enhance perception capabilities with far fewer of the test drives that used to be necessary, and even before hardware is available.

Teaming Up To Achieve Autonomy

“The ability to train, develop and validate imaging radar sensors in simulation is critical to the development and validation of autonomous technology,” said Zvi Greenstein, general manager of automotive at NVIDIA. “NVIDIA DRIVE Sim enables Arbe and its customers to make big strides in deploying this technology for autonomous vehicles — and is supercharging Arbe to advance this process and the future of transportation as a whole.”

© Arbe. All rights reserved.